How Large Are The Memory Registers In A 32-bit OS?

Main Memory

References:

- Abraham Silberschatz, Greg Gagne, and Peter Baer Galvin, "Operating System Concepts, Ninth Edition", Chapter 8

8.1 Background

- Obviously memory accesses and memory management are a very important part of modern computer operation. Every instruction has to be fetched from memory before it can be executed, and most instructions involve retrieving data from memory or storing data in memory or both.

- The advent of multi-tasking OSes compounds the complexity of memory management, because as processes are swapped in and out of the CPU, so must their code and data be swapped in and out of memory, all at high speeds and without interfering with any other processes.

- Shared memory, virtual memory, the classification of memory as read-only versus read-write, and concepts like copy-on-write forking all further complicate the issue.

8.1.1 Basic Hardware

- It should be noted that from the memory chips' point of view, all memory accesses are equivalent. The memory hardware doesn't know what a particular part of memory is being used for, nor does it care. This is almost true of the OS as well, although not entirely.

- The CPU can only access its registers and main memory. It cannot, for example, make direct access to the hard drive, so any data stored there must first be transferred into the main memory chips before the CPU can work with it. ( Device drivers communicate with their hardware via interrupts and "memory" accesses, sending short instructions, for example, to transfer data from the hard drive to a specified location in main memory. The disk controller monitors the bus for such instructions, transfers the data, and then notifies the CPU that the data is there with another interrupt, but the CPU never gets direct access to the disk. )

- Memory accesses to registers are very fast, generally one clock tick, and a CPU may be able to execute more than one machine instruction per clock tick.

- Memory accesses to main memory are comparatively slow, and may take a number of clock ticks to complete. This would require intolerable waiting by the CPU if it were not for an intermediary fast memory cache built into most modern CPUs. The basic idea of the cache is to transfer chunks of memory at a time from the main memory to the cache, and then to access individual memory locations one at a time from the cache.

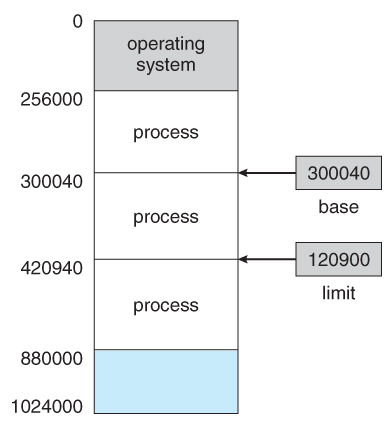

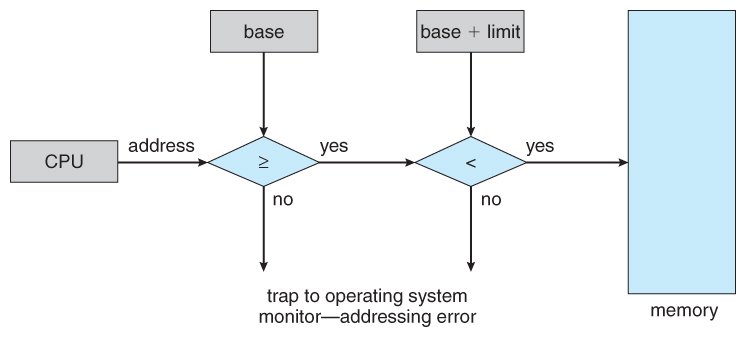

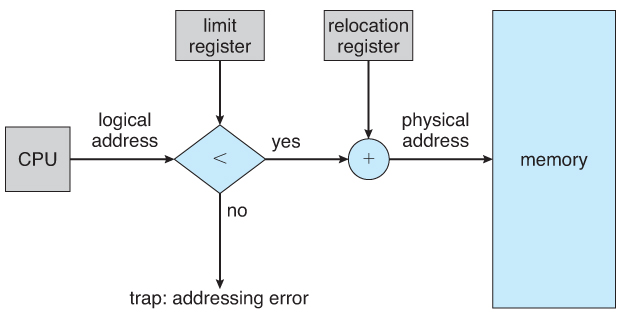

- User processes must be restricted so that they only access memory locations that "belong" to that particular process. This is usually implemented using a base register and a limit register for each process, as shown in Figures 8.1 and 8.2 below. Every memory access made by a user process is checked against these two registers, and if a memory access is attempted outside the valid range, then a fatal error is generated. The OS obviously has access to all existing memory locations, as this is necessary to swap users' code and data in and out of memory. It should also be obvious that changing the contents of the base and limit registers is a privileged activity, allowed only to the OS kernel.
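The base/limit check can be sketched in a few lines of Python. The function name `is_legal_access` and the register values are hypothetical; real hardware performs this comparison on every user-mode access and traps to the OS on failure instead of returning a boolean.

```python
def is_legal_access(address: int, base: int, limit: int) -> bool:
    """Return True if a user-mode address lies in [base, base + limit)."""
    # Both comparisons must pass; failing either one would trap to the OS.
    return base <= address < base + limit


# Hypothetical register contents for one process.
BASE, LIMIT = 300040, 120900

print(is_legal_access(300040, BASE, LIMIT))  # True: first legal byte
print(is_legal_access(420939, BASE, LIMIT))  # True: last legal byte (base + limit - 1)
print(is_legal_access(420940, BASE, LIMIT))  # False: one past the end, would trap
```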

Figure 8.1 - A base and a limit register define a logical address space

Figure 8.2 - Hardware address protection with base and limit registers

8.1.2 Address Binding

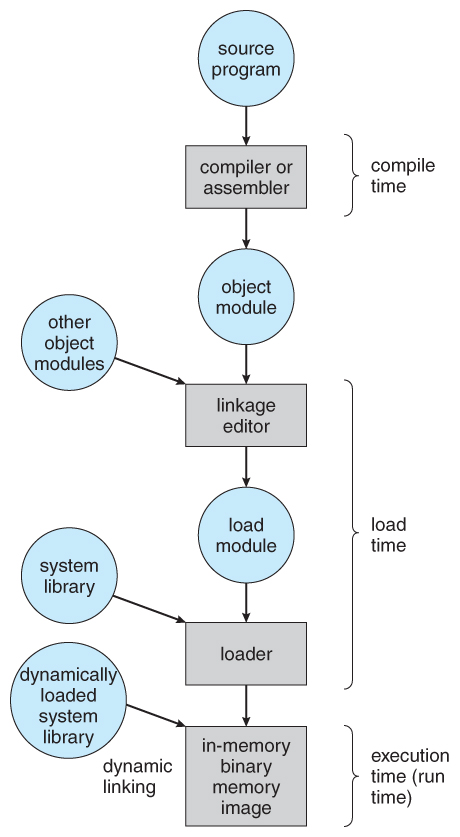

- User programs typically refer to memory addresses with symbolic names such as "i", "count", and "averageTemperature". These symbolic names must be mapped or bound to physical memory addresses, which typically occurs in several stages:

- Compile Time - If it is known at compile time where a program will reside in physical memory, then absolute code can be generated by the compiler, containing actual physical addresses. However, if the load address changes at some later time, then the program will have to be recompiled. DOS .COM programs use compile time binding.

- Load Time - If the location at which a program will be loaded is not known at compile time, then the compiler must generate relocatable code, which references addresses relative to the start of the program. If that starting address changes, then the program must be reloaded but not recompiled.

- Execution Time - If a program can be moved around in memory during the course of its execution, then binding must be delayed until execution time. This requires special hardware, and is the method implemented by most modern OSes.

- Figure 8.3 shows the various stages of the binding processes and the units involved in each stage:

Figure 8.3 - Multistep processing of a user program

8.1.3 Logical Versus Physical Address Space

- The address generated by the CPU is a logical address, whereas the address actually seen by the memory hardware is a physical address.

- Addresses bound at compile time or load time have identical logical and physical addresses.

- Addresses created at execution time, however, have different logical and physical addresses.

- In this case the logical address is also known as a virtual address, and the two terms are used interchangeably by our text.

- The set of all logical addresses used by a program composes the logical address space, and the set of all corresponding physical addresses composes the physical address space.

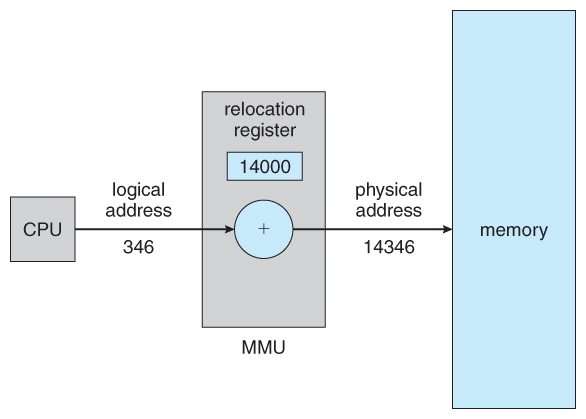

- The run time mapping of logical to physical addresses is handled by the memory-management unit, MMU.

- The MMU can take on many forms. One of the simplest is a modification of the base-register scheme described earlier.

- The base register is now termed a relocation register, whose value is added to every memory request at the hardware level.

- Note that user programs never see physical addresses. User programs work entirely in logical address space, and any memory references or manipulations are done using purely logical addresses. Only when the address gets sent to the physical memory chips is the physical memory address generated.
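A minimal Python sketch of a relocation-register MMU follows. It also applies a limit check, as in the relocation-plus-limit hardware of Figure 8.6; the names `mmu_translate` and `AddressingError` and the register values are hypothetical.

```python
class AddressingError(Exception):
    """Stands in for the hardware trap raised on an out-of-range address."""


def mmu_translate(logical: int, relocation: int, limit: int) -> int:
    """Map a logical address to a physical one, as a simple relocation-register MMU would."""
    # Logical addresses run from 0 to limit - 1; anything else is a protection fault.
    if not 0 <= logical < limit:
        raise AddressingError(f"logical address {logical} outside 0..{limit - 1}")
    # The relocation register is added to every in-range address.
    return logical + relocation


print(mmu_translate(346, relocation=14000, limit=1000))  # 14346
```

The user program only ever sees `346`; the value `14346` exists only on the way to the memory chips.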

Figure 8.4 - Dynamic relocation using a relocation register

8.1.4 Dynamic Loading

- Rather than loading an entire program into memory at once, dynamic loading loads up each routine as it is called. The advantage is that unused routines need never be loaded, reducing total memory usage and generating faster program startup times. The downside is the added complexity and overhead of checking to see if a routine is loaded every time it is called and then loading it up if it is not already loaded.

8.1.5 Dynamic Linking and Shared Libraries

- With static linking, library modules get fully included in executable modules, wasting both disk space and main memory, because every program that included a certain routine from the library would have to have its own copy of that routine linked into its executable code.

- With dynamic linking, however, only a stub is linked into the executable module, containing references to the actual library module linked in at run time.

- This method saves disk space, because the library routines do not need to be fully included in the executable modules, only the stubs.

- We will also learn that if the code section of the library routines is reentrant, ( meaning it does not modify the code while it runs, making it safe to re-enter it ), then main memory can be saved by loading only one copy of dynamically linked routines into memory and sharing the code amongst all processes that are concurrently using it. ( Each process would have its own copy of the data section of the routines, but that may be small relative to the code segments. ) Obviously the OS must manage shared routines in memory.

- An added benefit of dynamically linked libraries ( DLLs, also known as shared libraries or shared objects on UNIX systems ) involves easy upgrades and updates. When a program uses a routine from a statically linked standard library and the routine changes, then the program must be re-built ( re-linked ) in order to incorporate the changes. However, if DLLs are used, then as long as the stub doesn't change, the program can be updated merely by loading new versions of the DLLs onto the system. Version information is maintained in both the program and the DLLs, so that a program can specify a particular version of the DLL if necessary.

- In practice, the first time a program calls a DLL routine, the stub will recognize the fact and will replace itself with the actual routine from the DLL library. Further calls to the same routine will access the routine directly and not incur the overhead of the stub access. ( Following the UML Proxy Pattern. )

- ( Additional information regarding dynamic linking is available at http://www.iecc.com/linker/linker10.html )
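The self-replacing stub can be sketched as a Python proxy object. This is an analogy, not how a real loader works: real loaders patch addresses in a table (for example, the GOT on ELF systems), not Python objects. `_resolve`, `Stub`, and the `calls` table are hypothetical names, and `math` merely plays the role of the shared library.

```python
import math
from typing import Callable, Dict, List

resolutions: List[str] = []   # records every (slow) symbol lookup


def _resolve(symbol: str) -> Callable[..., float]:
    """Hypothetical stand-in for finding a routine in a loaded DLL; here it reads from math."""
    resolutions.append(symbol)
    return getattr(math, symbol)


class Stub:
    """Placed in the call table instead of the real routine."""

    def __init__(self, table: Dict[str, Callable[..., float]], symbol: str):
        self.table = table
        self.symbol = symbol

    def __call__(self, *args: float) -> float:
        real = _resolve(self.symbol)
        self.table[self.symbol] = real   # the stub replaces itself with the real routine
        return real(*args)


calls: Dict[str, Callable[..., float]] = {}
calls["sqrt"] = Stub(calls, "sqrt")

print(calls["sqrt"](16.0))   # 4.0, resolved through the stub
print(calls["sqrt"](25.0))   # 5.0, calls math.sqrt directly
print(resolutions)           # ['sqrt']: the lookup happened only once
```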

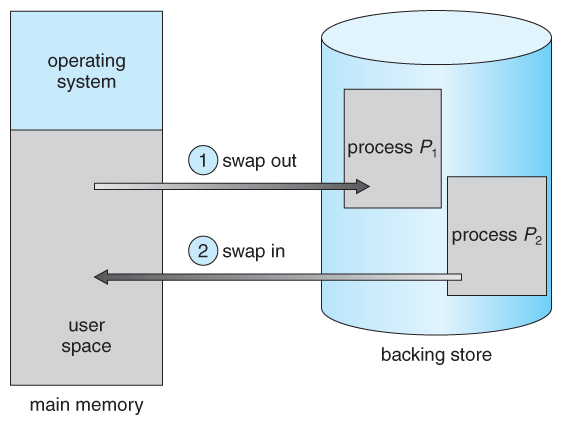

8.2 Swapping

- A process must be loaded into memory in order to execute.

- If there is not enough memory available to keep all running processes in memory at the same time, then some processes that are not currently using the CPU may have their memory swapped out to a fast local disk called the backing store.

8.2.1 Standard Swapping

- If compile-time or load-time address binding is used, then processes must be swapped back into the same memory location from which they were swapped out. If execution time binding is used, then the processes can be swapped back into any available location.

- Swapping is a very slow process compared to other operations. For example, if a user process occupied 10 MB and the transfer rate for the backing store were 40 MB per second, then it would take 1/4 second ( 250 milliseconds ) just to do the data transfer. Adding in a latency lag of 8 milliseconds and ignoring head seek time for the moment, and further recognizing that swapping involves moving old data out as well as new data in, the overall transfer time required for this swap is 2 * ( 250 + 8 ) = 516 milliseconds, or over half a second. For efficient processor scheduling the CPU time slice should be significantly longer than this lost transfer time.

- To reduce swapping transfer overhead, it is desirable to transfer as little information as possible, which requires that the system know how much memory a process is using, as opposed to how much it might use. Programmers can help with this by freeing up dynamic memory that they are no longer using.

- It is important to swap processes out of memory only when they are idle, or more to the point, only when there are no pending I/O operations. ( Otherwise the pending I/O operation could write into the wrong process's memory space. ) The solution is to either swap only totally idle processes, or do I/O operations only into and out of OS buffers, which are then transferred to or from the process's main memory as a second step.

- Most modern OSes no longer use swapping, because it is too slow and there are faster alternatives available. ( e.g. Paging. ) However, some UNIX systems will still invoke swapping if the system gets extremely full, and then discontinue swapping when the load reduces again. Windows 3.1 would use a modified version of swapping that was somewhat controlled by the user, swapping processes out if necessary and then only swapping them back in when the user focused on that particular window.
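The swap-time arithmetic above can be checked with a short Python function. The name `swap_time_ms` is hypothetical, and it assumes, as the example does, that the incoming process is the same size as the outgoing one and that seek time is ignored.

```python
def swap_time_ms(process_mb: float, rate_mb_per_s: float, latency_ms: float) -> float:
    """Time to swap one process out and an equally sized one in (seek time ignored)."""
    transfer_ms = process_mb / rate_mb_per_s * 1000   # one direction
    one_way_ms = transfer_ms + latency_ms
    return 2 * one_way_ms                              # out plus in


print(swap_time_ms(10, 40, 8))  # 516.0
```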

Figure 8.5 - Swapping of two processes using a disk as a backing store

8.2.2 Swapping on Mobile Systems ( New Section in 9th Edition )

- Swapping is typically not supported on mobile platforms, for several reasons:

- Mobile devices typically use flash memory in place of more spacious hard drives for persistent storage, so there is not as much space available.

- Flash memory can only be written to a limited number of times before it becomes unreliable.

- The bandwidth to flash memory is also lower.

- Apple's iOS asks applications to voluntarily free up memory

- Read-only data, e.g. code, is simply removed, and reloaded later if needed.

- Modified data, e.g. the stack, is never removed, but . . .

- Apps that fail to free up sufficient memory can be removed by the OS

- Android follows a similar strategy.

- Prior to terminating a process, Android writes its application state to flash memory for quick restarting.

8.3 Contiguous Memory Allocation

- One approach to memory management is to load each process into a contiguous space. The operating system is allocated space first, usually at either low or high memory locations, and then the remaining available memory is allocated to processes as needed. ( The OS is usually loaded low, because that is where the interrupt vectors are located, but on older systems part of the OS was loaded high to make more room in low memory ( within the 640K barrier ) for user processes. )

8.3.1 Memory Protection ( was Memory Mapping and Protection )

- The system shown in Figure 8.6 below allows protection against user programs accessing areas that they should not, allows programs to be relocated to different memory starting addresses as needed, and allows the memory space devoted to the OS to grow or shrink dynamically as needs change.

Figure 8.6 - Hardware support for relocation and limit registers

8.3.2 Memory Allocation

- One method of allocating contiguous memory is to divide all available memory into equal sized partitions, and to assign each process to its own partition. This restricts both the number of simultaneous processes and the maximum size of each process, and is no longer used.

- An alternate approach is to keep a list of unused ( free ) memory blocks ( holes ), and to find a hole of a suitable size whenever a process needs to be loaded into memory. There are many different strategies for finding the "best" allocation of memory to processes, including the three most commonly discussed:

- First fit - Search the list of holes until one is found that is big enough to satisfy the request, and assign a portion of that hole to that process. Whatever fraction of the hole not needed by the request is left on the free list as a smaller hole. Subsequent requests may start looking either from the beginning of the list or from the point at which this search ended.

- Best fit - Allocate the smallest hole that is big enough to satisfy the request. This saves large holes for other process requests that may need them later, but the resulting unused portions of holes may be too small to be of any use, and will therefore be wasted. Keeping the free list sorted can speed up the process of finding the right hole.

- Worst fit - Allocate the largest hole available, thereby increasing the likelihood that the remaining portion will be usable for satisfying future requests.

- Simulations show that either first or best fit are better than worst fit in terms of both time and storage utilization. First and best fits are about equal in terms of storage utilization, but first fit is faster.
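The three placement strategies differ only in which adequate hole they pick. The sketch below models the free list as a Python list of hole sizes (addresses omitted for brevity); the names `choose_hole` and `allocate` and the hole sizes are hypothetical. First fit here always searches from the start of the list, which is one of the two variants described above.

```python
from typing import List, Optional


def choose_hole(holes: List[int], request: int, strategy: str) -> Optional[int]:
    """Return the index of the hole chosen for `request`, or None if nothing fits."""
    candidates = [(size, i) for i, size in enumerate(holes) if size >= request]
    if not candidates:
        return None
    if strategy == "first":
        return candidates[0][1]        # earliest adequate hole in list order
    if strategy == "best":
        return min(candidates)[1]      # smallest adequate hole
    if strategy == "worst":
        return max(candidates)[1]      # largest hole
    raise ValueError(f"unknown strategy {strategy!r}")


def allocate(holes: List[int], request: int, strategy: str) -> bool:
    """Carve `request` out of the chosen hole, leaving any remainder on the list."""
    i = choose_hole(holes, request, strategy)
    if i is None:
        return False
    holes[i] -= request
    if holes[i] == 0:
        del holes[i]                   # an exact fit removes the hole entirely
    return True


free_list = [100, 500, 200, 300, 600]  # hypothetical hole sizes in KB
for s in ("first", "best", "worst"):
    print(s, choose_hole(free_list, 212, s))   # first 1, best 3, worst 4
```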

8.3.3 Fragmentation

- All the memory allocation strategies suffer from external fragmentation, though first and best fits experience the problems more so than worst fit. External fragmentation means that the available memory is broken up into lots of little pieces, none of which is big enough to satisfy the next memory requirement, although the sum total could.

- The amount of memory lost to fragmentation may vary with algorithm, usage patterns, and some design decisions such as which end of a hole to allocate and which end to save on the free list.

- Statistical analysis of first fit, for example, shows that for N blocks of allocated memory, another 0.5 N will be lost to fragmentation, so up to one third of memory may be unusable ( the "50-percent rule" ).

- Internal fragmentation also occurs, with all memory allocation strategies. This is caused by the fact that memory is allocated in blocks of a fixed size, whereas the actual memory needed will rarely be that exact size. For a random distribution of memory requests, on the average 1/2 block will be wasted per memory request, because on the average the last allocated block will be only half full.

- Note that the same effect happens with hard drives, and that modern hardware gives us increasingly larger drives and memory at the expense of ever larger block sizes, which translates to more memory lost to internal fragmentation.

- Some systems use variable size blocks to minimize losses due to internal fragmentation.

- If the programs in memory are relocatable ( using execution-time address binding ), then the external fragmentation problem can be reduced via compaction, i.e. moving all processes down to one end of physical memory. Apart from copying the memory itself, this only involves updating the relocation register for each process, as all internal work is done using logical addresses.

- Another solution, as we will see in upcoming sections, is to allow processes to use non-contiguous blocks of physical memory, with a separate relocation register for each block.
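Compaction can be sketched as sliding each process down to the lowest free address while preserving physical order. `Proc` and `compact` are hypothetical names, and the sketch only updates the relocation values; the comment marks where a real OS would copy the memory contents.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Proc:
    name: str
    base: int    # relocation-register value (start of its physical block)
    size: int


def compact(procs: List[Proc], memory_start: int = 0) -> int:
    """Slide every process toward low memory; return the start of the single free hole."""
    next_free = memory_start
    for p in sorted(procs, key=lambda p: p.base):   # keep the existing physical order
        # A real OS would copy p.size bytes from p.base down to next_free here.
        p.base = next_free          # only the relocation register changes
        next_free += p.size
    return next_free


# Hypothetical layout: the OS occupies addresses 0..99.
layout = [Proc("A", 100, 50), Proc("B", 300, 100), Proc("C", 600, 50)]
print(compact(layout, memory_start=100), [(p.name, p.base) for p in layout])
# 300 [('A', 100), ('B', 150), ('C', 250)]
```

Processing in ascending base order means each block moves only downward, so a real copy never overwrites a block that has not been moved yet.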

8.4 Segmentation

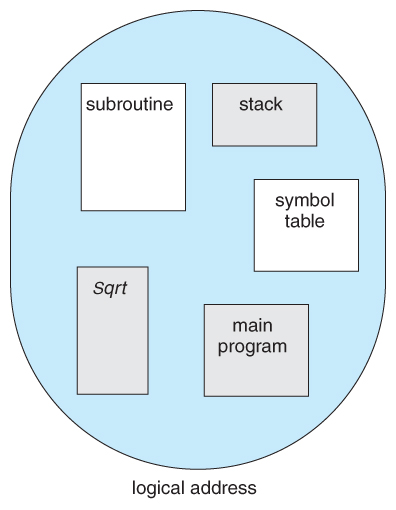

8.iv.1 Bones Method

- Nigh users ( programmers ) practise non retrieve of their programs as existing in one continuous linear address space.

- Rather they tend to think of their memory in multiple segments , each dedicated to a particular use, such equally code, data, the stack, the heap, etc.

- Retentivity segmentation supports this view by providing addresses with a segment number ( mapped to a segment base address ) and an first from the beginning of that segment.

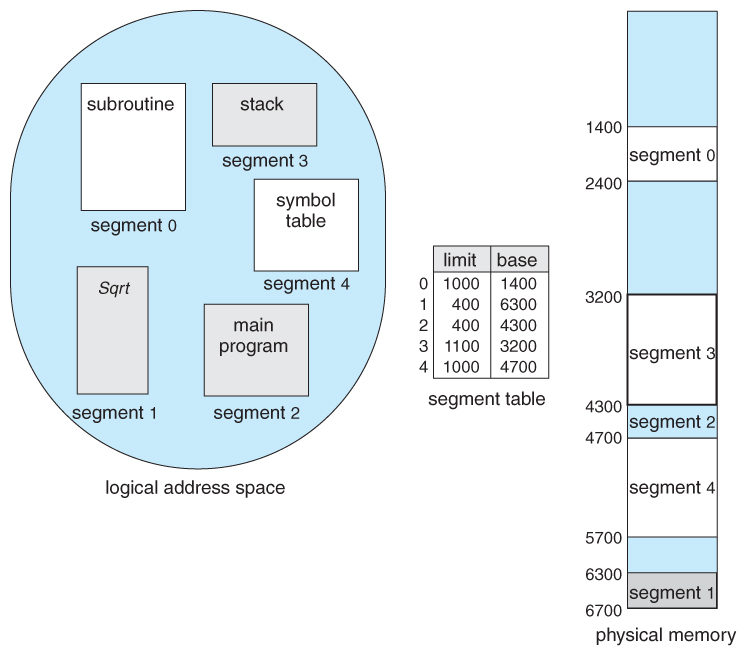

- For example, a C compiler might generate 5 segments for the user code, library code, global ( static ) variables, the stack, and the heap, every bit shown in Effigy 8.7:

Figure 8.seven Programmer's view of a program.

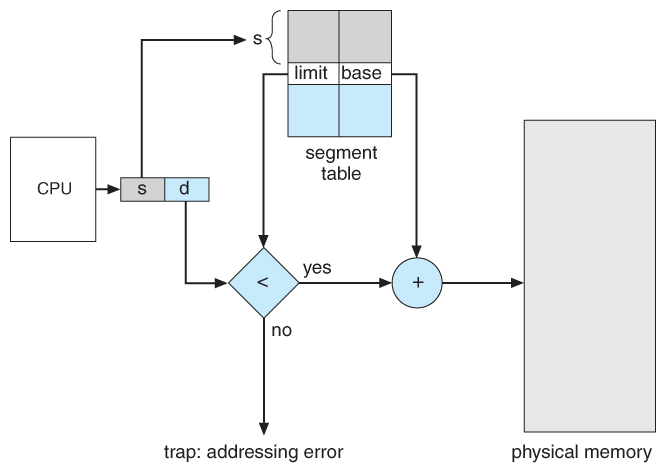

8.4.ii Segmentation Hardware

- A segment tabular array maps segment-outset addresses to physical addresses, and simultaneously checks for invalid addresses, using a system similar to the page tables and relocation base registers discussed previously. ( Note that at this signal in the discussion of sectionalisation, each segment is kept in contiguous memory and may be of different sizes, but that partition tin as well exist combined with paging as we shall see shortly. )
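A segment-table lookup amounts to a per-segment limit check followed by adding the segment base. The table contents and the names `SEGMENT_TABLE`, `translate_segment`, and `SegmentFault` below are hypothetical.

```python
from typing import List, Tuple


class SegmentFault(Exception):
    """Stands in for the trap raised on a bad segment number or offset."""


# Hypothetical segment table: (base, limit) indexed by segment number.
SEGMENT_TABLE: List[Tuple[int, int]] = [
    (1400, 1000),   # segment 0
    (6300, 400),    # segment 1
    (4300, 400),    # segment 2
    (3200, 1100),   # segment 3
    (4700, 1000),   # segment 4
]


def translate_segment(segment: int, offset: int) -> int:
    """Translate a (segment, offset) pair to a physical address."""
    if not 0 <= segment < len(SEGMENT_TABLE):
        raise SegmentFault(f"no segment {segment}")
    base, limit = SEGMENT_TABLE[segment]
    if not 0 <= offset < limit:
        raise SegmentFault(f"offset {offset} beyond limit {limit} of segment {segment}")
    return base + offset


print(translate_segment(2, 53))    # 4353
print(translate_segment(3, 852))   # 4052
```

Unlike paging, each segment carries its own limit, so the bounds check differs from segment to segment.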

Figure 8.8 - Segmentation hardware

Figure 8.9 - Example of segmentation

8.5 Paging

- Paging is a memory management scheme that allows a process's physical memory to be discontiguous, and which eliminates external fragmentation by allocating memory in equal sized blocks known as pages.

- Paging eliminates most of the problems of the other methods discussed previously, and is the predominant memory management technique used today.

8.5.1 Basic Method

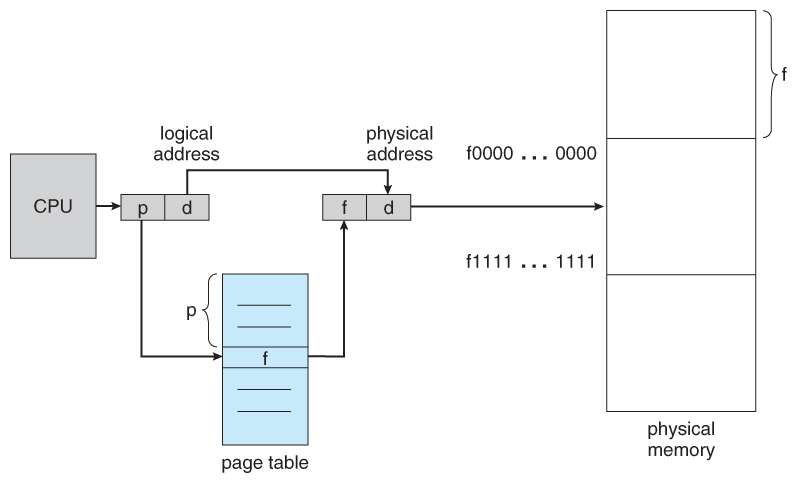

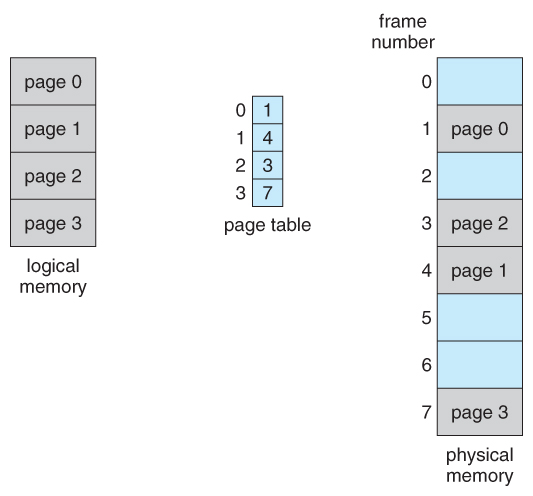

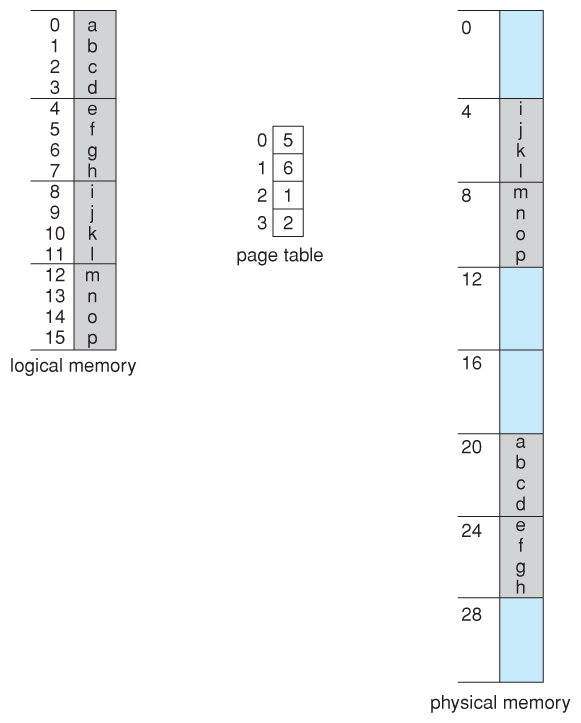

- The basic idea behind paging is to divide physical memory into a number of equal sized blocks called frames, and to divide a program's logical memory space into blocks of the same size called pages.

- Any page ( from any process ) can be placed into any available frame.

- The page table is used to look up what frame a particular page is stored in at the moment. In the following example, for instance, page 2 of the program's logical memory is currently stored in frame 3 of physical memory:

Figure 8.10 - Paging hardware

Figure 8.11 - Paging model of logical and physical memory

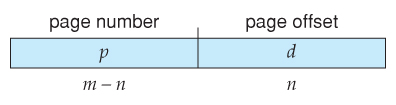

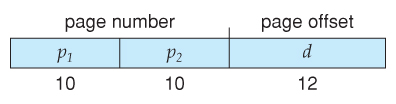

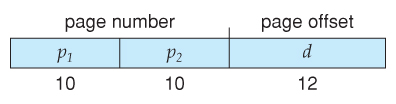

- A logical address consists of two parts: A page number in which the address resides, and an offset from the beginning of that page. ( The number of bits in the page number limits how many pages a single process can address. The number of bits in the offset determines the maximum size of each page, and should correspond to the system frame size. )

- The page table maps the page number to a frame number, to yield a physical address which also has two parts: The frame number and the offset within that frame. The number of bits in the frame number determines how many frames the system can address, and the number of bits in the offset determines the size of each frame.

- Page numbers, frame numbers, and frame sizes are determined by the architecture, but are typically powers of two, allowing addresses to be split at a certain number of bits. For example, if the logical address size is 2^m and the page size is 2^n, then the high-order m-n bits of a logical address designate the page number and the remaining n bits represent the offset.

- Note also that the number of bits in the page number and the number of bits in the frame number do not have to be identical. The former determines the address range of the logical address space, and the latter relates to the physical address space.

- ( DOS used an addressing scheme with 16-bit frame ( segment ) numbers and 16-bit offsets, on hardware that formed only 20-bit physical addresses ( physical = segment * 16 + offset ). The result was a resolution of starting frame addresses ( 16 bytes ) finer than the size of a single frame, and multiple frame-offset combinations that mapped to the same physical hardware address. )

- Consider the following micro example, in which a process has 16 bytes of logical memory, mapped in 4-byte pages into 32 bytes of physical memory. ( Presumably some other processes would be consuming the remaining 16 bytes of physical memory. )

Figure 8.12 - Paging example for a 32-byte memory with 4-byte pages
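The page-number/offset split and the page-table lookup for this micro example (m = 4, n = 2) can be sketched with bit operations. The page table contents `[5, 6, 1, 2]` are an assumption for illustration, and `split` and `to_physical` are hypothetical names.

```python
from typing import Tuple

OFFSET_BITS = 2     # n: 4-byte pages (2**2)
LOGICAL_BITS = 4    # m: 16-byte logical address space (2**4)

# Hypothetical page table for the 16-byte process: index = page, value = frame.
PAGE_TABLE = [5, 6, 1, 2]


def split(logical: int) -> Tuple[int, int]:
    """Split a logical address into (page number, offset)."""
    page = logical >> OFFSET_BITS                 # high-order m - n bits
    offset = logical & ((1 << OFFSET_BITS) - 1)   # low-order n bits
    return page, offset


def to_physical(logical: int) -> int:
    """Translate through the page table: frame * page_size + offset."""
    if not 0 <= logical < (1 << LOGICAL_BITS):
        raise ValueError(f"address {logical} is outside the logical address space")
    page, offset = split(logical)
    frame = PAGE_TABLE[page]
    return (frame << OFFSET_BITS) | offset


for address in (0, 3, 4, 13):
    print(address, "->", to_physical(address))   # 0 -> 20, 3 -> 23, 4 -> 24, 13 -> 9
```

Because the page size is a power of two, the split is a shift and a mask rather than a division.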

- Note that paging is like having a table of relocation registers, one for each page of the logical memory.

- There is no external fragmentation with paging. Any free frame can hold any page, so there are no gaps in between and no problems with finding the right sized hole for a particular chunk of memory.

- There is, however, internal fragmentation. Memory is allocated in chunks the size of a page, and on the average, the last page will only be half full, wasting on the average half a page of memory per process. ( Possibly more, if processes keep their code and data in separate pages. )

- Larger page sizes waste more memory, but are more efficient in terms of overhead. Modern trends have been to increase page sizes, and some systems even have multiple size pages to try and make the best of both worlds.

- Page table entries ( frame numbers ) are typically 32-bit numbers, allowing access to 2^32 physical page frames. If those frames are 4 KB in size each, that translates to 16 TB of addressable physical memory. ( 32 + 12 = 44 bits of physical address space. )
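The internal-fragmentation and address-space arithmetic above can be checked directly. The process size and page size in the example are illustrative values, and the function names are hypothetical.

```python
def pages_needed(process_bytes: int, page_size: int) -> int:
    """Frames required: the process size rounded up to whole pages."""
    return -(-process_bytes // page_size)   # ceiling division on integers


def internal_fragmentation(process_bytes: int, page_size: int) -> int:
    """Bytes wasted at the end of the last page."""
    return pages_needed(process_bytes, page_size) * page_size - process_bytes


print(pages_needed(72766, 2048), internal_fragmentation(72766, 2048))  # 36 962
print(2 ** (32 + 12) // 2 ** 40, "TB")  # 16 TB: 32-bit frame numbers, 4 KB frames
```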

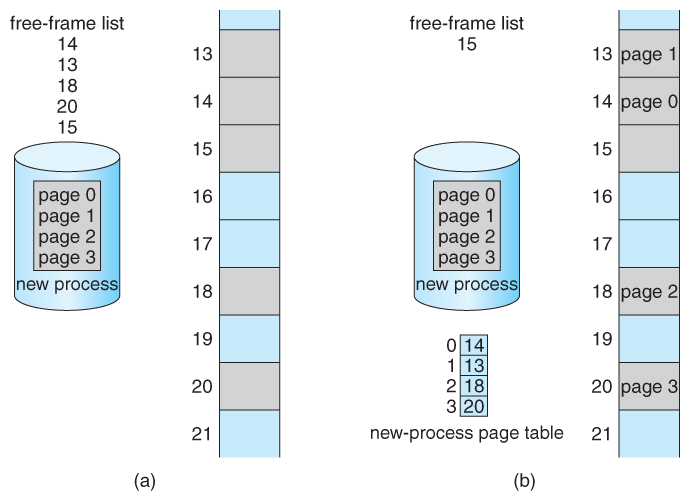

- When a process requests memory ( e.g. when its code is loaded in from disk ), free frames are allocated from a free-frame list, and inserted into that process's page table.

- Processes are blocked from accessing anyone else's memory because all of their memory requests are mapped through their page table. There is no way for them to generate an address that maps into any other process's memory space.

- The operating system must keep track of each individual process's page table, updating it whenever the process's pages get moved in and out of memory, and applying the correct page table when processing system calls for a particular process. This all increases the overhead involved when swapping processes in and out of the CPU. ( The currently active page table must be updated to reflect the process that is currently running. )
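Building a page table from a free-frame list can be sketched with a `deque`. The frame numbers and the name `allocate_pages` are hypothetical; a real kernel would also zero or fill the frames and handle the shortage case by paging rather than failing.

```python
from collections import deque
from typing import Deque, List

free_frames: Deque[int] = deque([14, 13, 18, 20, 15])   # hypothetical free-frame list


def allocate_pages(num_pages: int) -> List[int]:
    """Build a page table (index = page number) by taking frames off the free list."""
    if num_pages > len(free_frames):
        raise MemoryError("not enough free frames")
    return [free_frames.popleft() for _ in range(num_pages)]


page_table = allocate_pages(4)
print(page_table, list(free_frames))   # [14, 13, 18, 20] [15]
```

The frames handed out need not be adjacent or in order; the page table hides that from the process.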

Figure 8.13 - Free frames (a) before allocation and (b) after allocation

8.5.2 Hardware Support

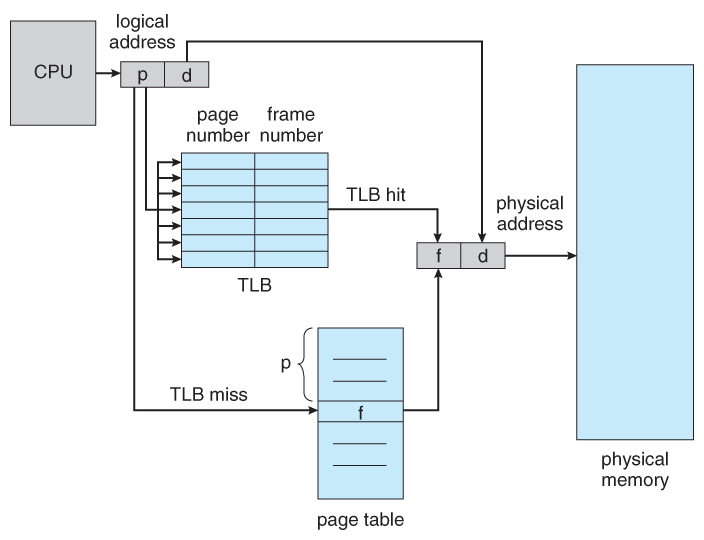

- Page lookups must be done for every memory reference, and whenever a process gets swapped in or out of the CPU, its page table must be swapped in and out too, along with the instruction registers, etc. It is therefore appropriate to provide hardware support for this operation, in order to make it as fast as possible and to make process switches as fast as possible also.

- One option is to use a set of registers for the page table. For example, the DEC PDP-11 uses 16-bit addressing and 8 KB pages, resulting in only 8 pages per process. ( It takes 13 bits to address 8 KB of offset, leaving only 3 bits to define a page number. )

- An alternate option is to store the page table in main memory, and to use a single register ( called the page-table base register, PTBR ) to record where in memory the page table is located.

- Process switching is fast, because only the single register needs to be changed.

- However, memory access just got half as fast, because every memory access now requires two memory accesses - One to fetch the frame number from memory and then another one to access the desired memory location.

- The solution to this problem is to use a very special high-speed memory device called the translation look-aside buffer, TLB.

- The benefit of the TLB is that it can search an entire table for a key value in parallel, and if it is found anywhere in the table, then the corresponding lookup value is returned.

Figure 8.14 - Paging hardware with TLB

- The TLB is very expensive, however, and therefore very small. ( Not large enough to hold the entire page table. ) It is therefore used as a cache device.

- Addresses are first checked against the TLB, and if the info is not there ( a TLB miss ), then the frame is looked up from main memory and the TLB is updated.

- If the TLB is full, then replacement strategies range from least-recently used, LRU, to random.

- Some TLBs allow some entries to be wired down, which means that they cannot be removed from the TLB. Typically these would be kernel frames.

- Some TLBs store address-space identifiers, ASIDs, to keep track of which process "owns" a particular entry in the TLB. This allows entries from multiple processes to be stored simultaneously in the TLB without granting one process access to some other process's memory location. Without this feature the TLB has to be flushed clean with every process switch.

- The percentage of time that the desired information is found in the TLB is termed the hit ratio.

- ( 8th Edition Version: ) For example, suppose that it takes 100 nanoseconds to access main memory, and only 20 nanoseconds to search the TLB. So a TLB hit takes 120 nanoseconds total ( 20 to find the frame number and then another 100 to go get the data ), and a TLB miss takes 220 ( 20 to search the TLB, 100 to go get the frame number, and then another 100 to go get the data. ) So with an 80% TLB hit ratio, the average memory access time would be:

0.80 * 120 + 0.20 * 220 = 140 nanoseconds

for a 40% slowdown to get the frame number. A 98% hit rate would yield 122 nanoseconds average access time ( you should verify this ), for a 22% slowdown.

- ( Ninth Edition Version: ) The ninth edition ignores the 20 nanoseconds required to search the TLB, yielding

0.80 * 100 + 0.20 * 200 = 120 nanoseconds

for a 20% slowdown to get the frame number. A 99% hit rate would yield 101 nanoseconds average access time ( you should verify this ), for a 1% slowdown.
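The TLB behaviour and the effective-access-time arithmetic above can be sketched together. The `TLB` class is a software model only: a real TLB searches all entries in parallel hardware, whereas this dictionary lookup just reproduces the outcome. Keying entries by `(asid, page)` and evicting the least recently used entry follow two of the options described above; all names and the page-table contents are hypothetical. Hit percentages are integers so the averages come out exact.

```python
from collections import OrderedDict
from typing import Dict


class TLB:
    """Tiny TLB model: (ASID, page) -> frame, with LRU replacement."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.entries = OrderedDict()   # (asid, page) -> frame, oldest use first
        self.hits = 0
        self.misses = 0

    def lookup(self, asid: int, page: int, page_table: Dict[int, int]) -> int:
        key = (asid, page)             # the ASID keeps processes' entries apart
        if key in self.entries:
            self.hits += 1
            self.entries.move_to_end(key)        # mark as most recently used
            return self.entries[key]
        self.misses += 1
        frame = page_table[page]                 # extra access to the in-memory page table
        if len(self.entries) >= self.capacity:
            self.entries.popitem(last=False)     # evict the least recently used entry
        self.entries[key] = frame
        return frame


def effective_access_time(hit_pct: int, mem_ns: int, tlb_ns: int = 0) -> float:
    """Average access time: a hit costs one memory access, a miss costs two."""
    hit = tlb_ns + mem_ns
    miss = tlb_ns + 2 * mem_ns
    return (hit_pct * hit + (100 - hit_pct) * miss) / 100


print(effective_access_time(80, 100, 20))   # 140.0 (8th-edition numbers)
print(effective_access_time(98, 100, 20))   # 122.0
print(effective_access_time(80, 100))       # 120.0 (TLB search time ignored)
print(effective_access_time(99, 100))       # 101.0

tlb = TLB(capacity=2)
pt = {0: 5, 1: 6, 2: 1}                      # hypothetical page table for ASID 1
for page in (0, 1, 0, 2, 1):
    tlb.lookup(1, page, pt)
print(tlb.hits, tlb.misses)                  # 1 4
```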

8.five.3 Protection

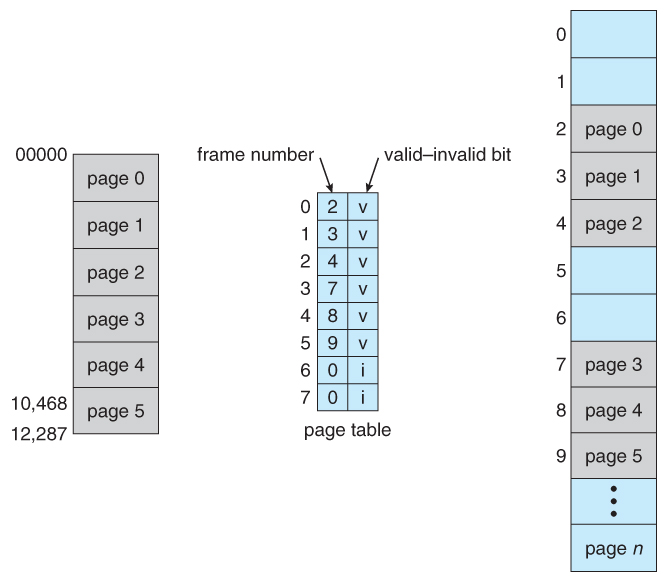

- The page table can also help to protect processes from accessing memory that they shouldn't, or their ain memory in means that they shouldn't.

- A bit or bits can be added to the folio tabular array to classify a page as read-write, read-only, read-write-execute, or some combination of these sorts of things. So each memory reference can exist checked to ensure it is accessing the retentivity in the advisable manner.

- Valid / invalid bits can be added to "mask off" entries in the folio table that are not in utilize past the electric current process, as shown by instance in Effigy viii.12 beneath.

- Note that the valid / invalid $.25 described above cannot block all illegal memory accesses, due to the internal fragmentation. ( Areas of memory in the last page that are non entirely filled by the process, and may incorporate data left over past whoever used that frame last. )

- Many processes do not use all of the page table bachelor to them, particularly in modern systems with very large potential page tables. Rather than waste memory by creating a full-size page table for every process, some systems use a folio-tabular array length register, PTLR , to specify the length of the page table.
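The three checks described above (PTLR bound, valid bit, access-mode bits) can be combined in one lookup. The `PTE` fields, `check_access`, and `ProtectionFault` are hypothetical; real page-table entry layouts are architecture-specific.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PTE:
    frame: int
    valid: bool = True
    read: bool = True
    write: bool = False
    execute: bool = False


class ProtectionFault(Exception):
    """Stands in for the hardware trap to the OS."""


def check_access(page_table: List[PTE], ptlr: int, page: int, mode: str) -> int:
    """Return the frame for `page` if `mode` ('r', 'w' or 'x') is allowed, else trap."""
    if page >= ptlr:                       # beyond the page-table length register
        raise ProtectionFault(f"page {page} >= PTLR {ptlr}")
    pte = page_table[page]
    if not pte.valid:                      # entry masked off for this process
        raise ProtectionFault(f"page {page} is invalid")
    allowed = {"r": pte.read, "w": pte.write, "x": pte.execute}[mode]
    if not allowed:
        raise ProtectionFault(f"{mode!r} access to page {page} not permitted")
    return pte.frame


# Hypothetical table: page 0 is code (read/execute), page 1 is data (read/write).
table = [PTE(2, execute=True), PTE(3, write=True), PTE(0, valid=False)]
print(check_access(table, 3, 0, "x"), check_access(table, 3, 1, "w"))   # 2 3
```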

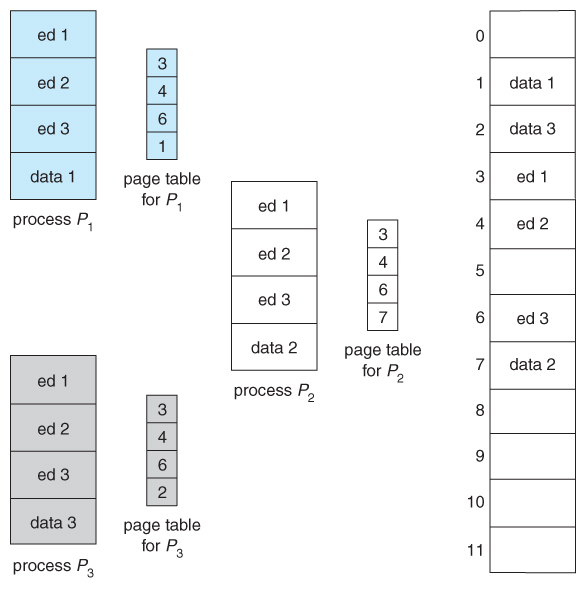

Figure 8.15 - Valid (v) or invalid (i) bit in page table

8.5.4 Shared Pages

- Paging systems can make it very easy to share blocks of memory, by simply placing the same frame numbers in the page tables of multiple processes. This may be done with either code or data.

- If code is reentrant, that means that it does not write to or change the code in any way ( it is not self-modifying ), and it is therefore safe to re-enter it. More importantly, it means the code can be shared by multiple processes, so long as each has its own copy of the data and registers, including the instruction register.

- In the example given below, three different users are running the editor simultaneously, but the code is only loaded into memory ( in the page frames ) one time.

- Some systems also implement shared memory in this fashion.
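Sharing reentrant code just means that several page tables contain the same frame numbers. The frame numbers and process names below are hypothetical.

```python
# Hypothetical frames: the editor's code occupies frames 3, 4 and 6,
# and each user's private data lives in a frame of its own.
SHARED_CODE_FRAMES = [3, 4, 6]

page_tables = {
    "P1": SHARED_CODE_FRAMES + [1],
    "P2": SHARED_CODE_FRAMES + [7],
    "P3": SHARED_CODE_FRAMES + [2],
}

frames_used = {f for table in page_tables.values() for f in table}
print(len(frames_used))   # 6 frames, versus 12 if each process had its own code copy
```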

Figure 8.16 - Sharing of code in a paging surroundings

8.6 Structure of the Folio Table

8.half dozen.1 Hierarchical Paging

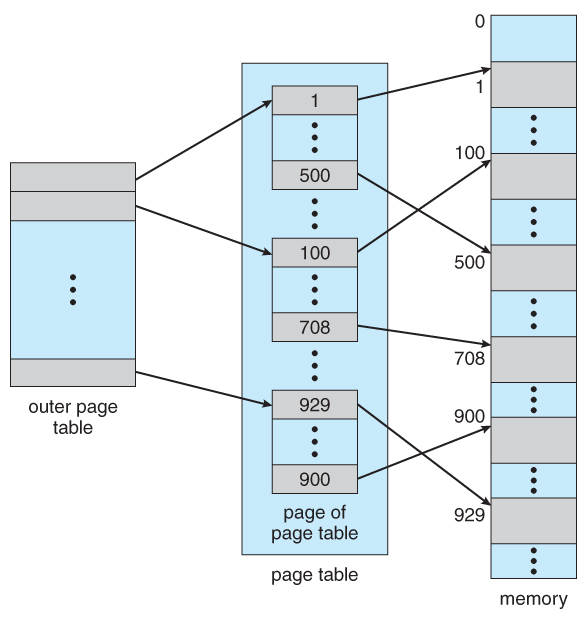

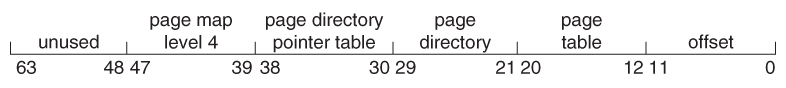

- Most modern computer systems back up logical address spaces of ii^32 to 2^64.

- With a two^32 accost space and 4K ( 2^12 ) page sizes, this go out 2^20 entries in the page table. At 4 bytes per entry, this amounts to a 4 MB folio table, which is too large to reasonably keep in face-to-face retention. ( And to swap in and out of memory with each procedure switch. ) Note that with 4K pages, this would take 1024 pages only to hold the page table!

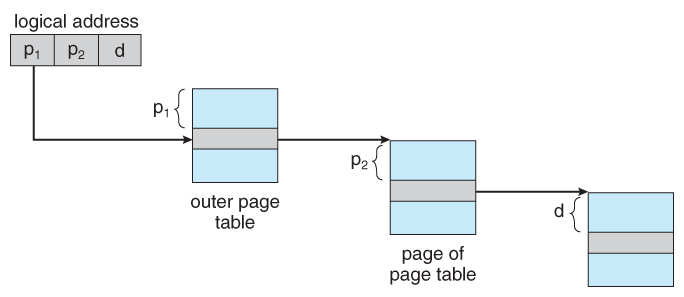

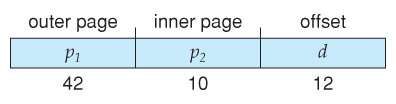

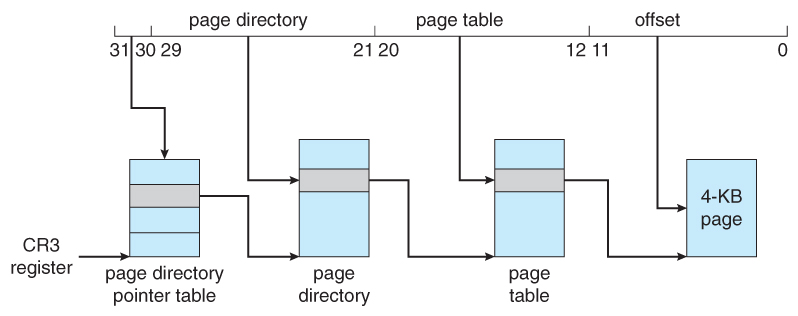

- One option is to use a two-tier paging system, i.e. to page the page table.

- For example, the 20 bits described above could be broken down into two 10-bit page numbers. The first identifies an entry in the outer page table, which identifies where in memory to find one page of an inner page table. The second 10 bits finds a specific entry in that inner page table, which in turn identifies a particular frame in physical memory. ( The remaining 12 bits of the 32-bit logical address are the offset within the 4K frame. )
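The 10/10/12 split and the two-step walk can be sketched as follows. Nested dictionaries stand in for the outer and inner tables, so only the populated parts exist, which is the point of paging the page table. `split_two_level`, `translate_two_level`, and the table contents are hypothetical.

```python
from typing import Dict, Tuple

OFFSET_BITS = 12   # 4 KB pages
INNER_BITS = 10    # index within one page of the inner page table
OUTER_BITS = 10    # index into the outer page table


def split_two_level(addr: int) -> Tuple[int, int, int]:
    """Split a 32-bit logical address into (p1, p2, d)."""
    d = addr & ((1 << OFFSET_BITS) - 1)
    p2 = (addr >> OFFSET_BITS) & ((1 << INNER_BITS) - 1)
    p1 = (addr >> (OFFSET_BITS + INNER_BITS)) & ((1 << OUTER_BITS) - 1)
    return p1, p2, d


def translate_two_level(addr: int, outer_table: Dict[int, Dict[int, int]]) -> int:
    """Walk outer table -> inner table -> frame; a missing entry raises KeyError."""
    p1, p2, d = split_two_level(addr)
    inner_table = outer_table[p1]    # which page of the inner page table
    frame = inner_table[p2]
    return (frame << OFFSET_BITS) | d


outer = {1: {3: 0x2A}}               # hypothetical: one inner page, one mapping
print(split_two_level(0x00403ABC))                    # (1, 3, 2748)
print(hex(translate_two_level(0x00403ABC, outer)))    # 0x2aabc
```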

Figure 8.17 - A two-level page-table scheme

Figure 8.18 - Address translation for a two-level 32-bit paging architecture

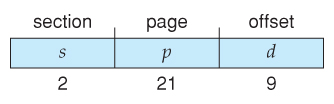

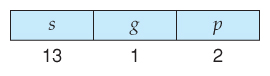

- VAX Architecture divides 32-bit addresses into 4 equal sized sections, and each page is 512 bytes, yielding an address course of:

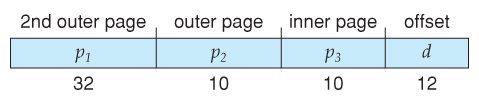

- With a 64-bit logical address space and 4K pages, there are 52 bits' worth of page numbers, which is still too many even for two-level paging. One could increase the number of paging levels, but with 10-bit page tables it would take 7 levels of indirection, which would make memory access prohibitively slow. So some other approach must be used.

A two-tier scheme for 64-bit addresses leaves 42 bits in the outer table.

Going to a third level still leaves 32 bits in the outer table.
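These bit counts are just the 64-bit address minus the 12-bit offset and 10 bits for each inner level. A small sketch, assuming 4K pages and 10-bit inner tables as in the text (`outer_table_bits` is a hypothetical helper):

```python
def outer_table_bits(levels, address_bits=64, offset_bits=12, inner_bits=10):
    """Bits left to index the outermost table when each inner level uses inner_bits."""
    return address_bits - offset_bits - inner_bits * (levels - 1)

for levels in (2, 3):
    # An outermost table indexed by this many bits has 2**bits entries.
    print(levels, outer_table_bits(levels))  # 2 42, then 3 32
```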

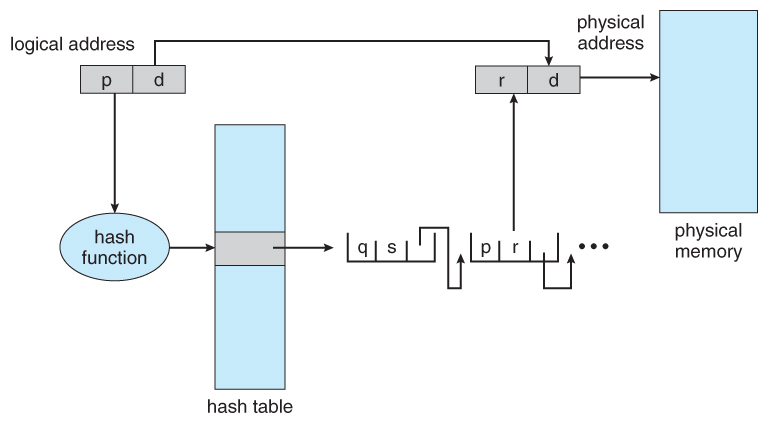

8.6.2 Hashed Page Tables

- One common data structure for accessing data that is sparsely distributed over a broad range of possible values is the hash table. Figure 8.19 below illustrates a hashed page table using chain-and-bucket hashing.
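A minimal Python sketch of the chained scheme, assuming a toy hash function (page number modulo the bucket count) and Python lists for the chains; a real implementation would use a hardware-friendly hash and fixed-size entries. The class and method names are hypothetical.

```python
class HashedPageTable:
    """Hashed page table: each bucket chains (page number, frame) elements."""

    def __init__(self, num_buckets=16):
        self.buckets = [[] for _ in range(num_buckets)]

    def _bucket(self, page):
        # Toy hash: page number modulo the number of buckets.
        return self.buckets[page % len(self.buckets)]

    def insert(self, page, frame):
        chain = self._bucket(page)
        for i, (p, _) in enumerate(chain):
            if p == page:              # page already present: update its frame
                chain[i] = (page, frame)
                return
        chain.append((page, frame))    # otherwise append to the chain

    def lookup(self, page):
        # Walk the chain comparing page numbers until a match is found.
        for p, frame in self._bucket(page):
            if p == page:
                return frame
        return None                    # no mapping: a real OS would raise a page fault

table = HashedPageTable(num_buckets=4)
table.insert(1, 10)
table.insert(5, 20)   # 5 % 4 == 1, so it chains behind page 1
print(table.lookup(5), table.lookup(9))  # 20 None
```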

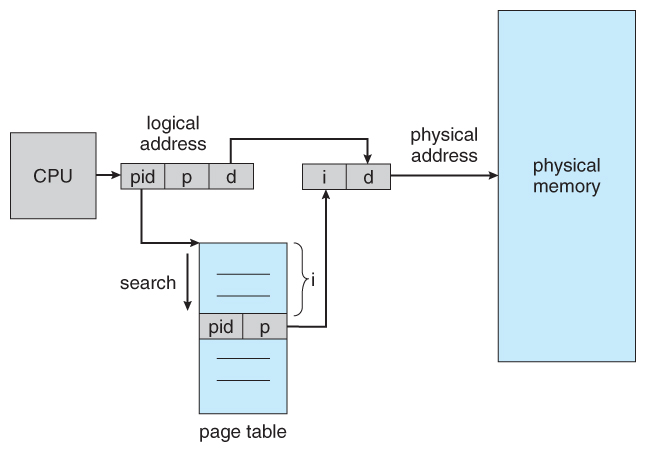

Figure 8.19 - Hashed page table

8.6.3 Inverted Page Tables

- Another approach is to use an inverted page table. Instead of a table listing all of the pages for a particular process, an inverted page table lists all of the pages currently loaded in memory, for all processes. ( I.e. there is one entry per frame instead of one entry per page. )

- Access to an inverted page table can be slow, as it may be necessary to search the entire table in order to find the desired page ( or to discover that it is not there. ) Hashing the table can help speed up the search process.

- Inverted page tables prohibit the normal method of implementing shared memory, which is to map multiple logical pages to a common physical frame. ( Because each frame is now mapped to one and only one process. )

Figure 8.20 - Inverted page table
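A minimal sketch of an inverted page table, assuming a plain Python list with one slot per physical frame, each holding a (process id, page number) pair. The linear search in `translate` is the slow lookup described above; all names are hypothetical.

```python
PAGE_SIZE = 4096

class InvertedPageTable:
    def __init__(self, num_frames):
        # One entry per physical frame: (pid, page), or None if the frame is free.
        self.entries = [None] * num_frames

    def load(self, frame, pid, page):
        self.entries[frame] = (pid, page)

    def translate(self, pid, page, offset):
        # Search every frame for the (pid, page) pair; the matching index is the frame.
        for frame, entry in enumerate(self.entries):
            if entry == (pid, page):
                return frame * PAGE_SIZE + offset
        raise LookupError(f"page fault: pid {pid}, page {page}")

ipt = InvertedPageTable(num_frames=8)
ipt.load(3, pid=1, page=0)
print(ipt.translate(1, 0, 0x10))  # 12304
```

Because each slot stores exactly one (pid, page) pair, a second process cannot also map one of its own pages to frame 3, which is the sharing limitation noted above.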

8.6.4 Oracle SPARC Solaris ( Optional, New Section in 9th Edition )

8.7 Example: Intel 32 and 64-bit Architectures ( Optional )

8.7.1 IA-32 Architecture

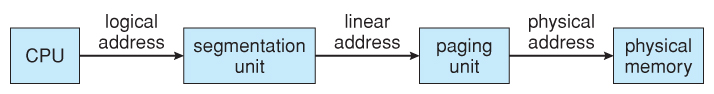

- The Pentium CPU provides both pure segmentation and segmentation with paging. In the latter case, the CPU generates a logical address ( segment-offset pair ), which the segmentation unit converts into a logical linear address, which in turn is mapped to a physical frame by the paging unit, as shown in Figure 8.21:

Figure 8.21 - Logical to physical address translation in IA-32

8.7.1.1 IA-32 Segmentation

- The Pentium architecture allows segments to be as large as 4 GB, ( 32 bits of offset ).

- Processes can have as many as 16K segments, divided into two 8K groups:

- 8K private to that particular process, stored in the Local Descriptor Table, LDT.

- 8K shared among all processes, stored in the Global Descriptor Table, GDT.

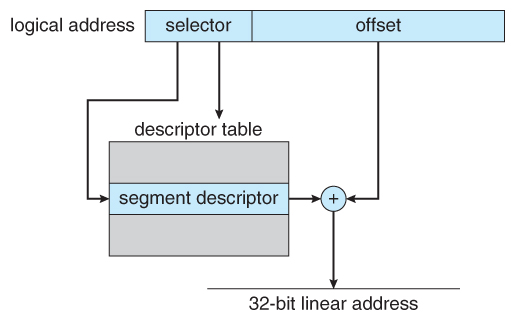

- Logical addresses are ( selector, offset ) pairs, where the selector is made up of 16 bits:

- A 13-bit segment number ( up to 8K )

- A 1-bit flag for LDT vs. GDT.

- 2 bits for protection codes.

- The descriptor tables contain 8-byte descriptions of each segment, including base and limit registers.

- Logical linear addresses are generated by looking the selector up in the descriptor table and adding the appropriate base address to the offset, as shown in Figure 8.22.
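A sketch of the selector decoding and base-plus-offset step. The bit positions used here (segment number in bits 15-3, table indicator in bit 2 with 1 meaning LDT, protection bits in 1-0) follow Intel's documented selector layout; the descriptor tables are simplified to hypothetical dicts of (base, limit) pairs rather than real 8-byte descriptors, and a `ValueError` stands in for the hardware protection fault.

```python
def decode_selector(selector):
    """Split a 16-bit selector into (segment number, table, protection bits)."""
    index = (selector >> 3) & 0x1FFF                # 13-bit segment number
    table = "LDT" if selector & 0b100 else "GDT"    # 1-bit table indicator
    rpl = selector & 0b11                           # 2 protection bits
    return index, table, rpl

def linear_address(selector, offset, gdt, ldt):
    """Look the selector up in a descriptor table and add its base to the offset."""
    index, table, _ = decode_selector(selector)
    descriptors = ldt if table == "LDT" else gdt
    base, limit = descriptors[index]                # simplified (base, limit) descriptor
    if offset > limit:                              # limit = last valid offset
        raise ValueError("segment limit violation")
    return base + offset

gdt = {2: (0x10000, 0xFFFF)}  # hypothetical segment 2: base 0x10000, 64 KB long
print(decode_selector(0x0010))                      # (2, 'GDT', 0)
print(hex(linear_address(0x0010, 0x100, gdt, {})))  # 0x10100
```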

Figure 8.22 - IA-32 segmentation

8.7.1.2 IA-32 Paging

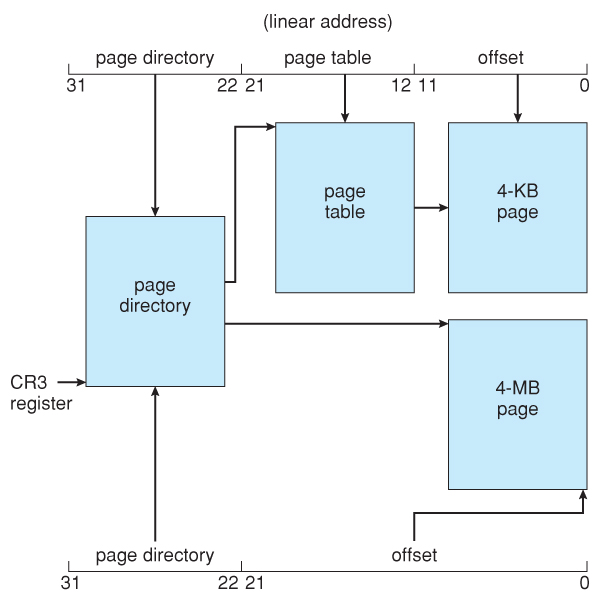

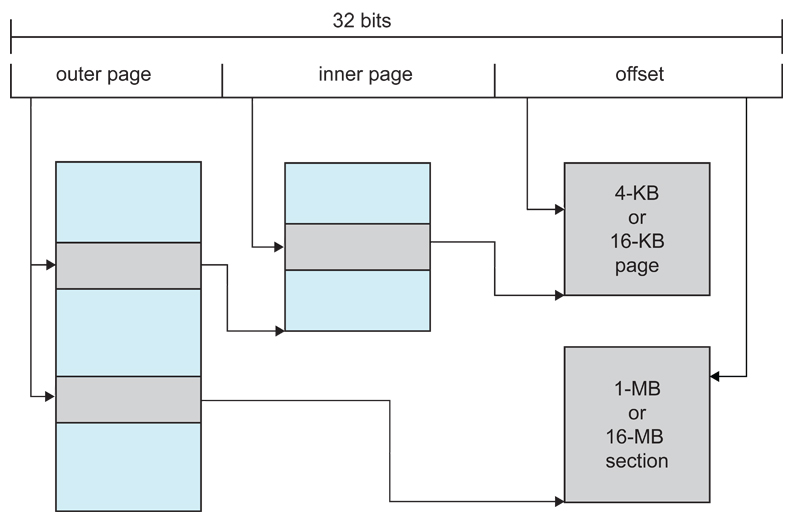

- Pentium paging normally uses a two-tier paging scheme, with the first 10 bits being a page number for an outer page table ( a.k.a. page directory ), and the next 10 bits being a page number within one of the 1024 inner page tables, leaving the remaining 12 bits as an offset into a 4K page.

- A special bit in the page directory can indicate that this page is a 4MB page, in which case the remaining 22 bits are all used as offset and the inner tier of page tables is not used.

- The CR3 register points to the page directory for the current process, as shown in Figure 8.23 below.

- If the inner page table is currently swapped out to disk, then the page directory will have an "invalid bit" set, and the remaining 31 bits provide information on where to find the swapped-out page table on the disk.

Figure 8.23 - Paging in the IA-32 architecture.
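A minimal sketch of the two IA-32 cases described above. The page directory is modeled as a hypothetical dict whose entries are tagged either `"4MB"` (a large page, holding its physical base address) or `"table"` (holding an inner page table dict of physical frame base addresses). Real entries are 32-bit words with flag bits, and the walk would start from the directory that CR3 points to.

```python
def translate_ia32(addr, page_directory):
    dir_index = addr >> 22                      # top 10 bits: page directory index
    entry = page_directory.get(dir_index)
    if entry is None:
        raise LookupError("page fault: directory entry not present")
    kind, value = entry
    if kind == "4MB":
        # Large page: the low 22 bits are all offset; no inner table is consulted.
        return value + (addr & ((1 << 22) - 1))
    # Regular 4K page: the next 10 bits index the inner page table.
    table_index = (addr >> 12) & 0x3FF
    frame_base = value.get(table_index)
    if frame_base is None:
        raise LookupError("page fault: page table entry not present")
    return frame_base + (addr & 0xFFF)          # low 12 bits: offset in the 4K page

pd = {1: ("table", {2: 0x7000}), 3: ("4MB", 0x01000000)}
print(hex(translate_ia32(0x00402034, pd)))  # 0x7034
print(hex(translate_ia32(0x00C12345, pd)))  # 0x1012345
```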

Figure 8.24 - Page address extensions.

8.7.2 x86-64

Figure 8.25 - x86-64 linear address.

8.8 Example: ARM Architecture ( Optional )

Figure 8.26 - Logical address translation in ARM.

Old 8.7.3 Linux on Pentium Systems - Omitted from the Ninth Edition

- Because Linux is designed for a wide variety of platforms, some of which offer only limited support for segmentation, Linux supports minimal segmentation. Specifically, Linux uses only 6 segments:

- Kernel code.

- Kernel data.

- User code.

- User data.

- A task-state segment, TSS

- A default LDT segment

- All processes share the same user code and data segments, because all processes share the same logical address space and all segment descriptors are stored in the Global Descriptor Table. ( The LDT is generally not used. )

- Each process has its own TSS, whose descriptor is stored in the GDT. The TSS stores the hardware state of a process during context switches.

- The default LDT is shared by all processes and generally not used, but if a process needs to create its own LDT, it may do so, and use that instead of the default.

- The Pentium architecture provides 2 bits ( 4 values ) for protection in a segment selector, but Linux only uses two values: user mode and kernel mode.

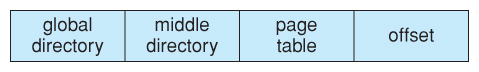

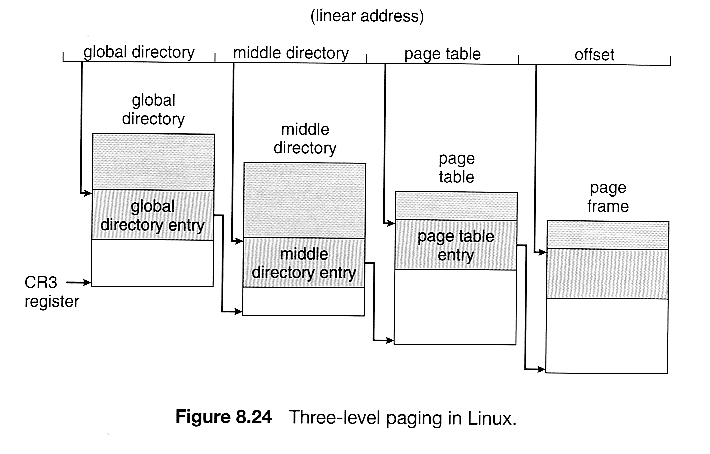

- Because Linux is designed to run on 64-bit as well as 32-bit architectures, it employs a three-level paging strategy as shown in Figure 8.24, where the number of bits in each portion of the address varies by architecture. In the case of the Pentium architecture, the size of the middle directory portion is set to 0 bits, effectively bypassing the middle directory.
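A sketch of the idea that the field widths are per-architecture parameters. The function name is hypothetical, and the Pentium call uses the 10/0/10/12 split implied by the text, where the middle directory gets 0 bits and so always yields index 0.

```python
def split_linux(addr, global_bits, middle_bits, table_bits, offset_bits):
    """Split an address into (global dir, middle dir, page table, offset) indices."""
    offset = addr & ((1 << offset_bits) - 1)
    addr >>= offset_bits
    table = addr & ((1 << table_bits) - 1)
    addr >>= table_bits
    middle = addr & ((1 << middle_bits) - 1)    # mask is 0 when middle_bits == 0
    addr >>= middle_bits
    global_dir = addr & ((1 << global_bits) - 1)
    return global_dir, middle, table, offset

# Pentium case: 10-bit global directory, 0-bit middle directory, 10-bit table, 12-bit offset.
print(split_linux(0x00402034, 10, 0, 10, 12))  # (1, 0, 2, 52)
```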

8.8 Summary


( For a fun and easy explanation of paging, you may want to read about The Paging Game. )


Source: https://www.cs.uic.edu/~jbell/CourseNotes/OperatingSystems/8_MainMemory.html

Posted by: moorebobtly.blogspot.com
